With each new generation of processor, performance gains are a natural expectation. The 4th Generation Intel Xeon Scalable Processor, formerly code-named Sapphire Rapids, takes a new approach to processor design that delivers both greater performance and hardened security. Both are critically important to decision makers deploying servers for markets including AI, analytics, storage, networking, data center, HPC, and enterprise.

The 4th Generation Intel Xeon Scalable Processor is based on a new architecture that uses a multi-tile design: four tiles connected by Intel’s Embedded Multi-die Interconnect Bridge (EMIB) technology. The processor acts as a single SoC, and every thread has access to the resources on all tiles, which provides consistently low latency across the entire chip.

What’s Inside

- New Tile Architecture on Intel 7 Manufacturing Node

- Increased Core Count, Up to 56

- Integrated and New Accelerator Engines

- Improved I/O

  - Compute Express Link (CXL) 1.1

  - PCIe Gen 5

  - UPI 2.0

- Improved Memory with DDR5 Support

  - Specific HBM (High Bandwidth Memory) SKUs

  - Optane Support

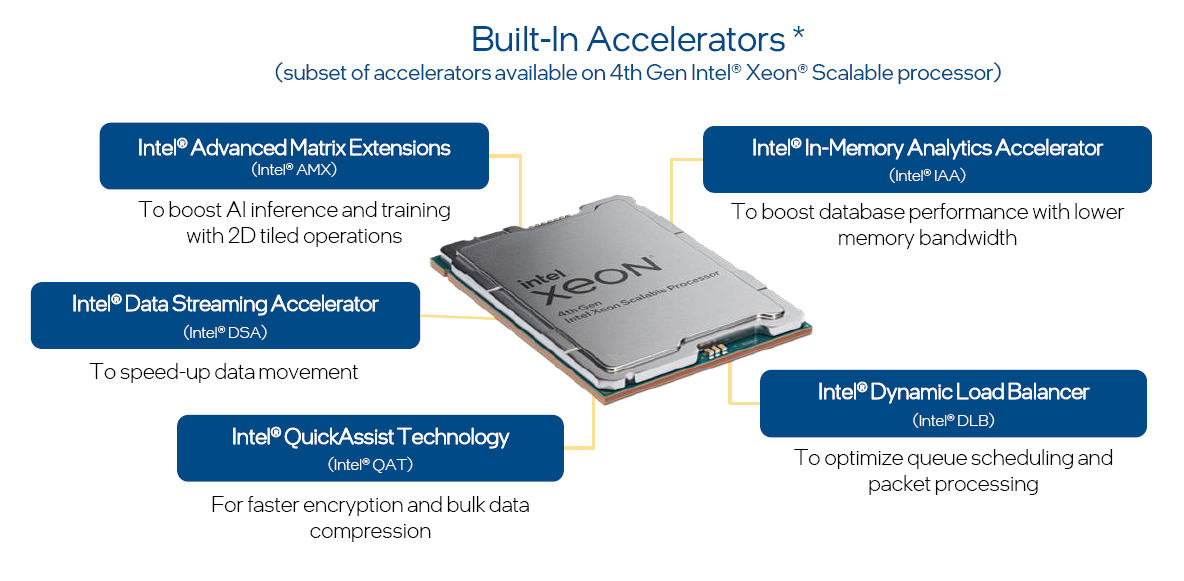

To improve overall processor efficiency and security, the 4th Generation Intel Xeon processor uses purpose-built accelerators that are integrated on the chip across its four tiles. These accelerators allow highly compute-intensive tasks that would otherwise consume CPU core resources to be offloaded, which dramatically improves core utilization and performance.

Why Accelerators Matter

It is predicted that by 2025, 90% of all enterprise applications will include some level of AI. Additionally, nearly 63% of all enterprises were breached to some extent in the past year. Accelerators that boost AI performance and help bring a zero trust security strategy to life, while increasing overall performance, make the 4th Generation Intel Xeon Scalable Processor the best and most flexible choice, no matter the deployment path.

Intel® Advanced Matrix Extensions

AI workloads demand both accuracy and large amounts of compute, so accelerators can have a dramatic impact on overall performance. AI applications commonly use three data types: FP32 (32-bit floating point), BFloat16, and INT8. Intel AVX-512, which has been available since the 1st Generation Intel Xeon Scalable Processor, accelerates FP32 workloads. The 4th Gen Intel Xeon builds on it with Intel AMX (Advanced Matrix Extensions), an AI accelerator for BFloat16 and INT8. Together, AVX-512 and Intel AMX provide on-chip acceleration for all three AI data types.
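To make the three data types concrete, here is a minimal Python sketch of how an FP32 value maps to BFloat16 and to INT8. It is a software illustration of the number formats only and does not use Intel AMX or AVX-512 instructions. The function names are hypothetical, the BFloat16 conversion assumes round-to-nearest-even and ignores NaN handling, and the INT8 path assumes simple symmetric per-tensor quantization, which is one common scheme among several.

```python
import struct


def fp32_to_bf16_bits(x: float) -> int:
    """Return the 16-bit BFloat16 pattern for x.

    BFloat16 keeps the sign, the 8 exponent bits, and the top 7 mantissa
    bits of an FP32 value. Assumption: round-to-nearest-even, no NaN care.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bf16_bits_to_fp32(b: int) -> float:
    """Expand a BFloat16 bit pattern back to FP32 by zero-filling the low bits."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


def quantize_int8(values):
    """Symmetric per-tensor INT8 quantization (illustrative assumption).

    Returns (quantized integers in [-127, 127], scale) so that
    value is approximately quantized * scale.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


if __name__ == "__main__":
    x = 3.14159
    bf16 = fp32_to_bf16_bits(x)
    print(f"FP32 {x} -> BF16 bits 0x{bf16:04X} -> {bf16_bits_to_fp32(bf16)}")
    print(quantize_int8([1.27, -0.64, 0.0]))
```

BFloat16 keeps the full FP32 exponent range while giving up mantissa precision, and INT8 trades range and precision for even smaller operands. Both choices let more values fit into each matrix operation.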

Intel Data Streaming Accelerator

Moving data is essential to analyzing it and using it to drive business decisions. Intel DSA is designed to speed up storage, networking, and data-intensive workloads by offloading the most common data movement tasks from the CPU cores. It helps accelerate data movement across the CPU, memory, and caches, as well as attached storage and network devices.

Intel Quick Assist Technology

In many use cases today, data encryption is an absolute requirement for securing data in flight, at rest, and in use. Encrypting and decrypting data is compute-intensive and can consume a significant amount of CPU resources. Intel QAT, which on earlier platforms was available in the chipset or on add-in adapters and is now integrated on chip with the 4th Gen Intel Xeon processor, accelerates cryptography, private key protection, and data compression.

Intel In-Memory Analytics Accelerator

Intel IAA allows you to run database and analytics workloads faster, with potentially greater power efficiency. It increases query throughput and decreases the memory footprint for in-memory database and big data analytics workloads. Intel IAA is ideal for in-memory databases, open source databases, and data stores such as RocksDB, Redis, Cassandra, and MySQL.

Intel Dynamic Load Balancer

Intel DLB efficiently distributes network processing across multiple CPU cores and threads, rebalancing dynamically as the system load varies. Intel DLB also restores the order of networking data packets that were processed simultaneously on different CPU cores.
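The two ideas in this description, load-aware distribution and order restoration, can be sketched in software. The Python below is only a conceptual analogy under stated assumptions: it is not the Intel DLB programming interface, all function names are hypothetical, each packet is modeled as a `(sequence_number, cost)` pair, and sequence numbers are assumed to start at 0 with no gaps.

```python
import heapq


def distribute(packets, num_workers):
    """Assign each (seq, cost) packet to the worker with the least queued work."""
    loads = [0] * num_workers
    queues = [[] for _ in range(num_workers)]
    for seq, cost in packets:
        worker = loads.index(min(loads))  # least-loaded worker so far
        queues[worker].append((seq, cost))
        loads[worker] += cost
    return queues


def completion_order(queues):
    """Simulate workers running in parallel; return seqs in finish-time order."""
    finished = []
    for queue in queues:
        clock = 0
        for seq, cost in queue:
            clock += cost  # each worker processes its own queue serially
            finished.append((clock, seq))
    return [seq for _, seq in sorted(finished)]


def restore_order(completed_seqs):
    """Release packets strictly in sequence order, buffering early finishers."""
    pending, next_seq, released = [], 0, []
    for seq in completed_seqs:
        heapq.heappush(pending, seq)
        while pending and pending[0] == next_seq:
            released.append(heapq.heappop(pending))
            next_seq += 1
    return released


if __name__ == "__main__":
    packets = [(0, 5), (1, 1), (2, 1), (3, 1)]  # packet 0 is expensive
    queues = distribute(packets, num_workers=2)
    done = completion_order(queues)
    print("finished in order:", done)
    print("released in order:", restore_order(done))
```

In this toy run the expensive first packet finishes last, so the reorder stage holds the later packets until it completes. Performing this bookkeeping in dedicated hardware is what frees CPU cores from doing it in software.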

Availability of accelerators varies depending on SKU. Visit the Intel Product Specifications page for additional product details.
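On a Linux system, one way to see which instruction-set features a given processor exposes is to read the `flags` line of `/proc/cpuinfo`. The sketch below is a minimal example under stated assumptions: the helper names are hypothetical, and it only covers the CPU instruction extensions discussed above (Intel AMX and AVX-512) using the flag names the Linux kernel reports. Intel DSA, QAT, IAA, and DLB are separate on-chip devices and are not visible through CPU flags, so the product specifications remain the authoritative source.

```python
# Flag names as reported by the Linux kernel in /proc/cpuinfo.
FEATURE_FLAGS = {
    "Intel AMX tiles": "amx_tile",
    "Intel AMX BF16": "amx_bf16",
    "Intel AMX INT8": "amx_int8",
    "Intel AVX-512 (foundation)": "avx512f",
}


def cpu_flags(cpuinfo_text):
    """Return the set of flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def feature_report(cpuinfo_text):
    """Map each feature name to True/False depending on the reported flags."""
    flags = cpu_flags(cpuinfo_text)
    return {name: flag in flags for name, flag in FEATURE_FLAGS.items()}


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            text = f.read()
    except OSError:
        text = ""  # not Linux, or file not readable: everything reports False
    for name, present in feature_report(text).items():
        print(f"{name}: {'yes' if present else 'no'}")
```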